Classification is a technique for determining which of a fixed set of classes an object belongs to. There are 2 steps involved in classification:

A OneR classifier generates a set of rules that all test one particular attribute. It builds one rule for each attribute in the training data and then selects the rule with the smallest error. It treats all numerically valued features as continuous and uses a straightforward method to divide the range of values into several disjoint intervals.

How to choose the attribute to be used for the rule:

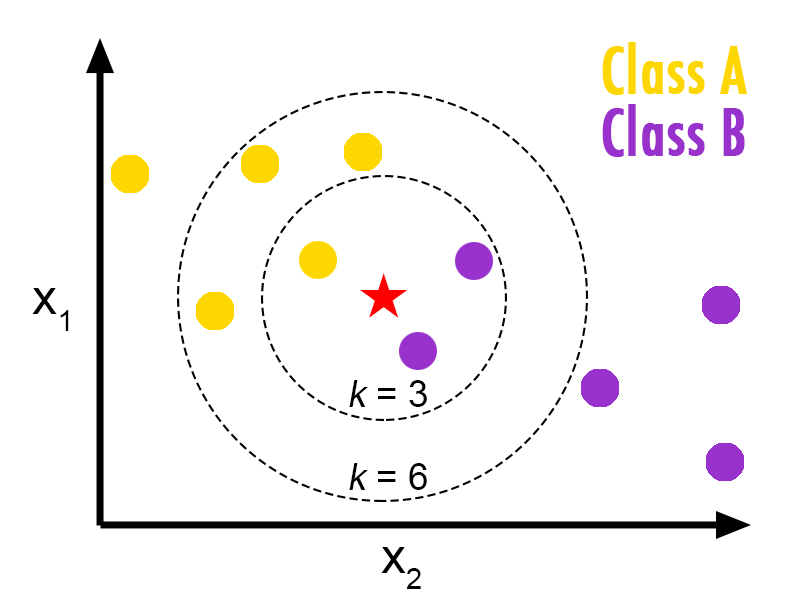
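The selection step described above can be sketched in a few lines. This is a minimal illustration, not a reference implementation: it assumes the attributes are already categorical (numeric features would first need to be split into intervals), and the function name `one_r` and the toy weather data are hypothetical.

```python
from collections import Counter, defaultdict

def one_r(rows, labels):
    """Return (attribute_index, rule, errors) for the attribute whose
    one-attribute rule makes the fewest mistakes on the training data."""
    best = None
    for attr in range(len(rows[0])):
        # Count how often each class occurs for every value of this attribute.
        counts = defaultdict(Counter)
        for row, label in zip(rows, labels):
            counts[row[attr]][label] += 1
        # The rule maps each attribute value to its most frequent class.
        rule = {value: c.most_common(1)[0][0] for value, c in counts.items()}
        # Errors are the training rows that the rule misclassifies.
        errors = sum(rule[row[attr]] != label for row, label in zip(rows, labels))
        if best is None or errors < best[2]:
            best = (attr, rule, errors)
    return best

# Hypothetical toy data: (outlook, windy) -> play?
rows = [
    ("sunny", "no"), ("sunny", "yes"),
    ("overcast", "no"), ("overcast", "yes"),
    ("rainy", "no"), ("rainy", "yes"), ("rainy", "no"),
]
labels = ["no", "no", "yes", "yes", "yes", "no", "yes"]

attr, rule, errors = one_r(rows, labels)
print(attr, errors)  # 0 1
```

On this toy data the rule on attribute 0 (outlook) misclassifies one row, while the rule on attribute 1 (windy) misclassifies two, so the outlook rule is selected.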

K nearest neighbor is a classification algorithm in which an object is assigned a class based on its nearest neighbors. The value of k specifies how many nearest neighbors are considered when determining the class of a sample data point.

It finds a group of k objects in the training set that are closest to the test object, and bases the assignment of a label on the predominance of a particular class in this neighborhood. There are three key elements of this approach: a set of labeled objects, e.g., a set of stored records; a distance or similarity metric to compute the distance between objects; and the value of k, the number of nearest neighbors.

To classify an unlabeled object, the distance of this object to the labeled objects is computed, its k-nearest neighbors are identified, and the class labels of these nearest neighbors are then used to determine the class label of the object.
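The three steps above (compute distances, pick the k nearest, vote on the label) can be sketched as follows. This is only an illustration under stated assumptions: it uses Euclidean distance and a simple majority vote, and the name `knn_classify` and the sample points are hypothetical.

```python
import math
from collections import Counter

def knn_classify(train, query, k=3):
    """Classify `query` by majority vote among its k nearest labeled objects.

    `train` is a list of (features, label) pairs; distance is Euclidean.
    """
    # Sort the labeled objects by distance to the query and keep the k closest.
    neighbors = sorted(train, key=lambda item: math.dist(item[0], query))[:k]
    # The predominant class among the neighbors becomes the predicted label.
    votes = Counter(label for _, label in neighbors)
    return votes.most_common(1)[0][0]

# Hypothetical labeled objects forming two well-separated groups.
train = [
    ((1.0, 1.0), "a"), ((1.2, 0.8), "a"), ((0.9, 1.1), "a"),
    ((5.0, 5.0), "b"), ((5.2, 4.9), "b"), ((4.8, 5.1), "b"),
]

print(knn_classify(train, (1.1, 1.0), k=3))  # a
print(knn_classify(train, (5.0, 5.1), k=3))  # b
```

An odd k is a common choice for two-class problems because it avoids tied votes.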

A decision tree is a flow-chart-like tree structure, where each internal node denotes a test on an attribute, each branch represents an outcome of the test, and each leaf node represents a class label or class distribution.
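To make the structure concrete, here is a small sketch of how such a tree could be represented and used to classify a record. The tree is written by hand rather than learned from data, and the attribute names, labels, and the `classify` helper are hypothetical.

```python
# Internal nodes are dicts holding an attribute test and one branch per outcome;
# leaves are plain class labels.
tree = {
    "attribute": "outlook",
    "branches": {
        "sunny": {
            "attribute": "windy",
            "branches": {"yes": "stay in", "no": "play"},
        },
        "overcast": "play",
        "rainy": "stay in",
    },
}

def classify(node, record):
    """Follow the branch matching each test outcome until a leaf is reached."""
    while isinstance(node, dict):
        outcome = record[node["attribute"]]
        node = node["branches"][outcome]
    return node

print(classify(tree, {"outlook": "sunny", "windy": "no"}))  # play
print(classify(tree, {"outlook": "rainy", "windy": "no"}))  # stay in
```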

The Decision Tree consists of 2 phases:

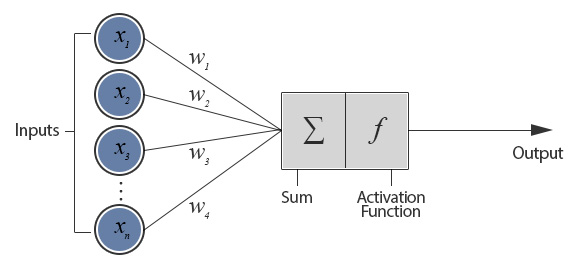

Artificial Neural Network is a network inspired by biological neural networks.

A neural network is a set of connected input/output units in which each connection has a weight associated with it. During the learning phase, the network learns by adjusting the weights so as to be able to predict the correct class label of the input.
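The idea of learning by adjusting weights can be illustrated with the simplest possible case: a single unit trained with the perceptron rule. This is a deliberate simplification of a full network, offered as a sketch only; the function names, the learning rate, and the choice of the logical AND task are assumptions made for the example.

```python
def predict(weights, bias, x):
    """Output 1 if the weighted sum of the inputs exceeds zero, else 0."""
    total = weights[0] * x[0] + weights[1] * x[1] + bias
    return 1 if total > 0 else 0

def train_perceptron(samples, epochs=20, lr=1.0):
    """Adjust the weights whenever the unit predicts the wrong class label."""
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for x, target in samples:
            error = target - predict(weights, bias, x)
            # Nudge each weight in the direction that reduces the error.
            weights[0] += lr * error * x[0]
            weights[1] += lr * error * x[1]
            bias += lr * error
    return weights, bias

# Hypothetical training data: the logical AND of two binary inputs.
and_samples = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

weights, bias = train_perceptron(and_samples)
print([predict(weights, bias, x) for x, _ in and_samples])  # [0, 0, 0, 1]
```

A single unit can only learn linearly separable tasks such as AND; networks with hidden layers and a gradient-based update are needed for anything more complex.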

The Artificial Neural Network consists of 2 phases:

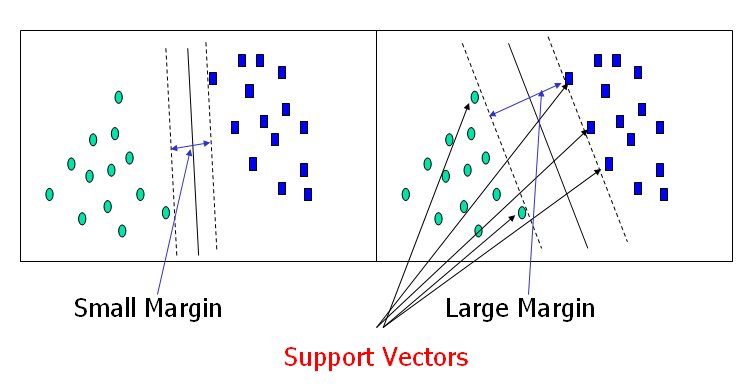

The aim of SVM is to find the best classification function to distinguish between members of the two classes in the training data. The metric for the concept of the “best” classification function can be realized geometrically.

For a linearly separable dataset, a linear classification function corresponds to a separating hyperplane f(x) that passes through the middle of the two classes, separating the two. Once this function is determined, a new data instance xn can be classified by simply testing the sign of the function f(xn); xn belongs to the positive class if f(xn) > 0.

The best function is found by maximizing the margin between the two classes. SVM insists on finding the maximum margin hyperplane because it offers the best generalization ability: it not only allows the best classification performance (e.g., accuracy) on the training data, but also leaves the most room for correctly classifying future data.
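The sign test and the notion of margin can be sketched without a full SVM solver. The example below assumes a linear function of the form f(x) = w·x + b and simply compares two hand-picked candidate hyperplanes; it does not perform the optimization an SVM actually carries out, and the points, weights, and function names are all hypothetical.

```python
import math

def f(w, b, x):
    """Linear classification function f(x) = w . x + b."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b

def classify(w, b, x):
    """Positive class if f(x) > 0, negative class otherwise."""
    return 1 if f(w, b, x) > 0 else -1

def margin(w, b, points):
    """Distance from the hyperplane to the closest training point."""
    norm = math.sqrt(sum(wi * wi for wi in w))
    return min(abs(f(w, b, x)) / norm for x in points)

# Hypothetical linearly separable training data.
points = [(1.0, 1.0), (2.0, 1.0), (4.0, 3.0), (5.0, 3.0)]
labels = [-1, -1, 1, 1]

# Two candidate hyperplanes that both separate the classes.
candidates = {"A": ((1.0, 0.0), -3.0), "B": ((1.0, 0.0), -2.5)}

for name, (w, b) in candidates.items():
    correct = all(classify(w, b, x) == y for x, y in zip(points, labels))
    print(name, correct, margin(w, b, points))
# A True 1.0
# B True 0.5
```

Both candidates classify the training data perfectly, but A keeps the closest point twice as far from the boundary, which is the property the maximum margin criterion prefers.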

Pinky S., P., Patel, R., J. Patel, A., & Joshi, M. (2015). Review on Classification Algorithms in Data Mining. International Journal of Emerging Technology and Advanced Engineering, 5(1), 593–595.

Wu, X., Kumar, V., Quinlan, J. R., Ghosh, J., Yang, Q., Motoda, H., … Steinberg, D. (2007). Top 10 algorithms in data mining. doi:10.1007/s10115-007-0114-2
